
Keras Cat Dog Classification - 9. Getting Started with Deep Learning - Overfitting 2 | When I Don't Really Feel Like Picking a Topic | 2019. 6. 14. 01:58

From installation to actual classification with Keras: working through the Cat and Dog dataset from start to finish

2019/06/13 - [When I Don't Really Feel Like Picking a Topic] - Keras Cat Dog Classification - 8. Getting Started with Deep Learning - Overfitting


12 - ImageDataGenerator

- Try augmenting the training data (generating randomly transformed variants of the training images).
- Never augment the validation or test data!!
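The rule above can be illustrated with a small sketch in plain NumPy rather than Keras. The helper `preprocess` is hypothetical: it always rescales, but applies a random horizontal flip only when `training=True`, so validation and test images are transformed identically every time and their scores stay comparable from epoch to epoch.

```python
import numpy as np

def preprocess(img, training, rng):
    """Hypothetical helper: rescale pixels to [0, 1] and, only for training
    data, flip the image horizontally with probability 0.5."""
    out = img.astype(np.float32) / 255.0  # same role as rescale=1./255
    if training and rng.random() < 0.5:
        out = out[:, ::-1]  # horizontal flip: reverse the width axis of (H, W, C)
    return out

rng = np.random.default_rng(0)
img = np.arange(12, dtype=np.uint8).reshape(2, 2, 3)  # tiny 2x2 RGB "image"

# Validation/test images: always transformed the same way
val_a = preprocess(img, training=False, rng=rng)
val_b = preprocess(img, training=False, rng=rng)
print(np.array_equal(val_a, val_b))  # True

# Training images: each pass may produce a different variant
train_views = [preprocess(img, training=True, rng=rng) for _ in range(5)]
```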

Changes from the previous post

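```python
from keras.preprocessing.image import ImageDataGenerator

# Project name
project_name = 'dog_cat_CNN_api3_datagen_model'

# Augment the training data
train_datagen = ImageDataGenerator(
    rescale=1./255,          # scale pixel values to [0, 1]
    rotation_range=40,       # random rotation up to 40 degrees
    width_shift_range=0.2,   # random horizontal shift, fraction of the width
    height_shift_range=0.2,  # random vertical shift, fraction of the height
    shear_range=0.2,         # random shear transformation
    horizontal_flip=True,    # randomly mirror images left/right
    fill_mode='nearest')     # fill exposed pixels with the nearest edge pixel
```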
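`fill_mode='nearest'` decides what fills the pixels that a shift or rotation exposes at the border. The simplified NumPy sketch below covers only a horizontal shift by a fixed amount; the helper `shift_width_nearest` is hypothetical, not part of Keras, which instead picks the shift at random up to `width_shift_range` (20% of the width, so up to about 30 pixels for the 150-pixel-wide images used here). It shows the edge pixel being repeated into the exposed columns.

```python
import numpy as np

def shift_width_nearest(img, shift):
    """Shift an (H, W, C) image right by `shift` pixels (left if negative),
    filling the exposed border with the nearest edge pixel."""
    w = img.shape[1]
    # For each output column, the source column it copies, clamped to the valid range
    src = np.clip(np.arange(w) - shift, 0, w - 1)
    return img[:, src]

# One-row, 5-column, 1-channel image with distinct pixel values
img = np.array([10, 20, 30, 40, 50]).reshape(1, 5, 1)
print(shift_width_nearest(img, 2)[0, :, 0])   # [10 10 10 20 30]
print(shift_width_nearest(img, -1)[0, :, 0])  # [20 30 40 50 50]
```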

Full code

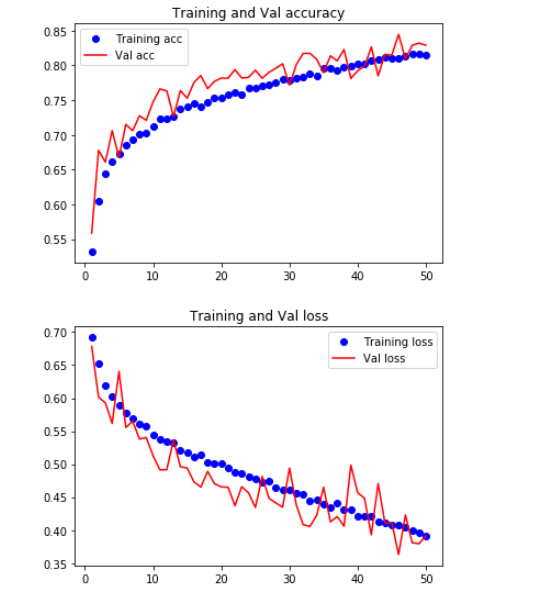

```python
from datetime import datetime
import os

import keras
from keras import Input
from keras import layers, models, losses, optimizers
from keras.preprocessing.image import ImageDataGenerator
from PIL import Image  # Pillow must be installed for Keras to load image files
import matplotlib.pyplot as plt

# Folder for TensorBoard logs and saved models
save_dir = './my_log'
if not os.path.isdir(save_dir):
    os.makedirs(save_dir)

project_name = 'dog_cat_CNN_api3_datagen_model'

def save_file():
    # Model file name: <timestamp>_my_<project_name>_model.h5 inside save_dir
    time = datetime.today()
    yy = time.year
    mon = time.month
    dd = time.day
    hh = time.hour
    mm = time.minute
    sec = time.second
    time_name = str(yy) + str(mon) + str(dd) + str(hh) + str(mm) + str(sec) + '_my_' + project_name + '_model.h5'
    file_name = os.path.join(save_dir, time_name)
    return file_name

callbacks = [
    keras.callbacks.TensorBoard(
        log_dir=save_dir,
        write_graph=True,
        write_images=True
    ),
    # Stop when val_acc has not improved for 10 epochs
    keras.callbacks.EarlyStopping(
        monitor='val_acc',
        patience=10,
    ),
    # Keep only the model with the lowest val_loss so far
    keras.callbacks.ModelCheckpoint(
        filepath=save_file(),
        monitor='val_loss',
        save_best_only=True,
    )
]

batch_size = 256
no_classes = 1  # binary classification: one sigmoid output
epochs = 50
image_height, image_width = 150, 150
input_shape = (image_height, image_width, 3)

def cnn_api3(input_shape):
    input_tensor = Input(input_shape, name="input")
    # Block 1: 32 filters
    x = layers.Conv2D(filters=32, kernel_size=(3, 3), padding="same", activation='relu')(input_tensor)
    x = layers.Conv2D(filters=32, kernel_size=(3, 3), padding="same", activation='relu')(x)
    x = layers.MaxPooling2D(pool_size=(2, 2))(x)
    x = layers.Dropout(rate=0.25)(x)
    # Block 2: 64 filters
    x = layers.Conv2D(filters=64, kernel_size=(3, 3), padding="same", activation='relu')(x)
    x = layers.Conv2D(filters=64, kernel_size=(3, 3), padding="same", activation='relu')(x)
    x = layers.MaxPooling2D(pool_size=(2, 2))(x)
    x = layers.Dropout(rate=0.25)(x)
    # Block 3: 128 filters
    x = layers.Conv2D(filters=128, kernel_size=(3, 3), padding="same", activation='relu')(x)
    x = layers.Conv2D(filters=128, kernel_size=(3, 3), padding="same", activation='relu')(x)
    x = layers.MaxPooling2D(pool_size=(2, 2))(x)
    x = layers.Dropout(rate=0.25)(x)
    # Classifier head
    x = layers.Flatten()(x)
    x = layers.Dense(units=1024, activation='relu')(x)
    x = layers.Dropout(rate=0.25)(x)
    output_tensor = layers.Dense(units=no_classes, activation='sigmoid', name="output")(x)
    model = models.Model([input_tensor], [output_tensor])
    model.compile(loss=losses.binary_crossentropy,
                  optimizer=optimizers.RMSprop(lr=0.0001),  # newer Keras versions use learning_rate=
                  metrics=['acc'])
    return model

# Training data: rescaling plus random augmentation
train_datagen = ImageDataGenerator(
    rescale=1./255,
    rotation_range=40,
    width_shift_range=0.2,
    height_shift_range=0.2,
    shear_range=0.2,
    horizontal_flip=True,
    fill_mode='nearest')
val_datagen = ImageDataGenerator(rescale=1./255)  # validation data: rescaling only

# copy_train_path is not defined in this post: it is the dataset folder prepared earlier
# in this series and must contain train/ and validation/ subfolders
train_generator = train_datagen.flow_from_directory(
    os.path.join(copy_train_path, "train"),
    target_size=(image_height, image_width),
    batch_size=batch_size,
    class_mode="binary"
)
validation_generator = val_datagen.flow_from_directory(
    os.path.join(copy_train_path, "validation"),
    target_size=(image_height, image_width),
    batch_size=batch_size,
    class_mode="binary"
)

newType_model = cnn_api3(input_shape)
# 20,000 training and 5,000 validation images (newer Keras: fit() replaces fit_generator())
hist = newType_model.fit_generator(train_generator,
                                   steps_per_epoch=20000 // batch_size,
                                   epochs=epochs,
                                   validation_data=validation_generator,
                                   validation_steps=5000 // batch_size,
                                   callbacks=callbacks)

# Plot training/validation accuracy and loss
train_acc = hist.history['acc']
val_acc = hist.history['val_acc']
train_loss = hist.history['loss']
val_loss = hist.history['val_loss']
epochs = range(1, len(train_acc) + 1)

plt.plot(epochs, train_acc, 'bo', label='Training acc')
plt.plot(epochs, val_acc, 'r', label='Val acc')
plt.title('Training and Val accuracy')
plt.legend()
plt.figure()
plt.plot(epochs, train_loss, 'bo', label='Training loss')
plt.plot(epochs, val_loss, 'r', label='Val loss')
plt.title('Training and Val loss')
plt.legend()
plt.show()
```

Thanks to data augmentation, overfitting has been reduced considerably, but the accuracy is still not good enough.

Let's try a better model.

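- Use a model that has already been built
- The Xception model
- Use a model whose weights have already been trained (`weights='imagenet'`).
- `include_top=False`: this argument decides whether the fully connected (Dense) classifier at the top of the network is included; with `False` it is left out.
- We need only one output here, so we add just our own Dense layers on top of the model.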

Modified model (Xception)

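```python
from keras import layers, models, losses, optimizers
from keras.applications import Xception

def xception_Model(input_shape):
    # Xception pretrained on ImageNet, without its top classifier (include_top=False)
    xceptionmodel = Xception(input_shape=input_shape, weights='imagenet', include_top=False)
    # Note: the Xception base is not frozen, so its weights are also updated during training
    model = models.Sequential()
    model.add(xceptionmodel)
    model.add(layers.Flatten())
    model.add(layers.Dense(units=256, activation="relu"))
    model.add(layers.Dense(units=no_classes, activation="sigmoid"))  # no_classes = 1: one output
    model.compile(loss=losses.binary_crossentropy,
                  optimizer=optimizers.RMSprop(),  # default learning rate
                  metrics=['acc'])
    return model
```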
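The new head ends in a single `sigmoid` unit trained with `binary_crossentropy`, which is why one output is enough: the unit gives a probability p for class 1, and the loss for a true label y is -(y log p + (1 - y) log(1 - p)). This is a small pure-Python sketch of that formula, not the Keras implementation. Which folder counts as class 1 depends on `flow_from_directory`, which numbers classes in alphanumeric order of the subfolder names.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def binary_crossentropy(y, p):
    """Loss for one example with true label y (0 or 1) and predicted probability p."""
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))

p = sigmoid(2.0)  # a raw score of 2.0 becomes a fairly confident "class 1"
print(round(p, 3))                          # 0.881
print(round(binary_crossentropy(1, p), 3))  # 0.127 (correct prediction, small loss)
print(round(binary_crossentropy(0, p), 3))  # 2.127 (wrong prediction, large loss)
```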

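Changes

```python
project_name = 'dog_cat_xception_datagen_model'

newType_model = xception_Model(input_shape)
```

The full code also changes `batch_size` to 32, `epochs` to 10, and the `EarlyStopping` patience to 3.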
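Lowering `batch_size` from 256 (first model) to 32 (Xception model) also changes how many batches make up one epoch, since the code uses `steps_per_epoch = 20000//batch_size` and `validation_steps = 5000//batch_size`. The helper `epoch_steps` below is a hypothetical arithmetic check, not part of Keras.

```python
import math

def epoch_steps(n_train, n_val, batch_size):
    """Return (steps_per_epoch, validation_steps, images_per_epoch, ceil_steps)."""
    steps = n_train // batch_size      # as in steps_per_epoch = 20000//batch_size
    val_steps = n_val // batch_size    # as in validation_steps = 5000//batch_size
    return steps, val_steps, steps * batch_size, math.ceil(n_train / batch_size)

print(epoch_steps(20000, 5000, 256))  # (78, 19, 19968, 79)
print(epoch_steps(20000, 5000, 32))   # (625, 156, 20000, 625)
```

Floor division drops a partial final batch, so with a batch size of 256 each epoch draws 19,968 training images; `math.ceil(n / batch_size)` is a common alternative if every image should be covered once per epoch.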

Full code

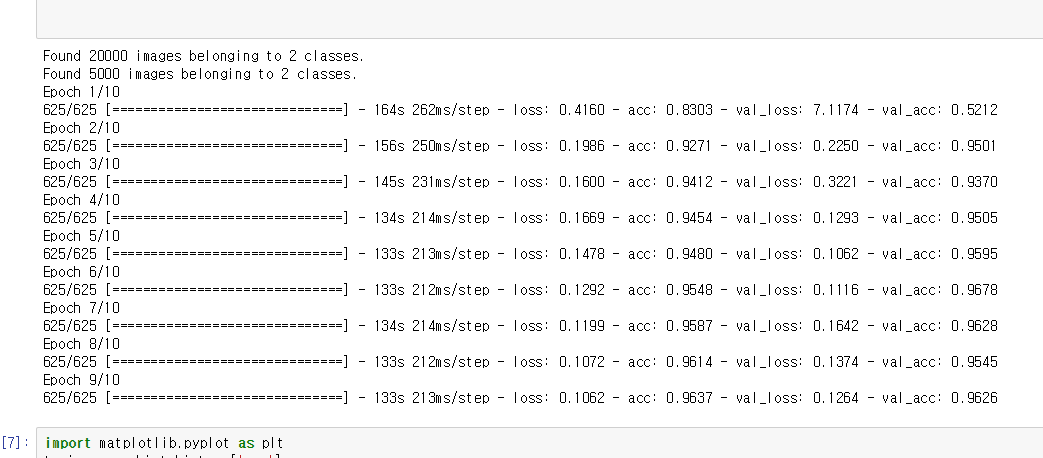

```python
from datetime import datetime
import os

import keras
from keras import layers, models, losses, optimizers
from keras.applications import Xception
from keras.preprocessing.image import ImageDataGenerator
from PIL import Image  # Pillow must be installed for Keras to load image files
import matplotlib.pyplot as plt

# Folder for TensorBoard logs and saved models
save_dir = './my_log'
if not os.path.isdir(save_dir):
    os.makedirs(save_dir)

project_name = 'dog_cat_xception_datagen_model'

def save_file():
    # Model file name: <timestamp>_my_<project_name>_model.h5 inside save_dir
    time = datetime.today()
    yy = time.year
    mon = time.month
    dd = time.day
    hh = time.hour
    mm = time.minute
    sec = time.second
    time_name = str(yy) + str(mon) + str(dd) + str(hh) + str(mm) + str(sec) + '_my_' + project_name + '_model.h5'
    file_name = os.path.join(save_dir, time_name)
    return file_name

callbacks = [
    keras.callbacks.TensorBoard(
        log_dir=save_dir,
        write_graph=True,
        write_images=True
    ),
    # Stop when val_acc has not improved for 3 epochs
    keras.callbacks.EarlyStopping(
        monitor='val_acc',
        patience=3,
    ),
    # Keep only the model with the lowest val_loss so far
    keras.callbacks.ModelCheckpoint(
        filepath=save_file(),
        monitor='val_loss',
        save_best_only=True,
    )
]

batch_size = 32
no_classes = 1  # binary classification: one sigmoid output
epochs = 10
image_height, image_width = 150, 150
input_shape = (image_height, image_width, 3)

def xception_Model(input_shape):
    # Xception pretrained on ImageNet, without its top classifier; the base is not frozen
    xceptionmodel = Xception(input_shape=input_shape, weights='imagenet', include_top=False)
    model = models.Sequential()
    model.add(xceptionmodel)
    model.add(layers.Flatten())
    model.add(layers.Dense(units=256, activation="relu"))
    model.add(layers.Dense(units=no_classes, activation="sigmoid"))
    model.compile(loss=losses.binary_crossentropy,
                  optimizer=optimizers.RMSprop(),
                  metrics=['acc'])
    return model

# Training data: rescaling plus random augmentation
train_datagen = ImageDataGenerator(
    rescale=1./255,
    rotation_range=40,
    width_shift_range=0.2,
    height_shift_range=0.2,
    shear_range=0.2,
    horizontal_flip=True,
    fill_mode='nearest')
val_datagen = ImageDataGenerator(rescale=1./255)  # validation data: rescaling only

# copy_train_path is not defined in this post: it is the dataset folder prepared earlier
# in this series and must contain train/ and validation/ subfolders
train_generator = train_datagen.flow_from_directory(
    os.path.join(copy_train_path, "train"),
    target_size=(image_height, image_width),
    batch_size=batch_size,
    class_mode="binary"
)
validation_generator = val_datagen.flow_from_directory(
    os.path.join(copy_train_path, "validation"),
    target_size=(image_height, image_width),
    batch_size=batch_size,
    class_mode="binary"
)

newType_model = xception_Model(input_shape)
# 20,000 training and 5,000 validation images (newer Keras: fit() replaces fit_generator())
hist = newType_model.fit_generator(train_generator,
                                   steps_per_epoch=20000 // batch_size,
                                   epochs=epochs,
                                   validation_data=validation_generator,
                                   validation_steps=5000 // batch_size,
                                   callbacks=callbacks)

# Plot training/validation accuracy and loss
train_acc = hist.history['acc']
val_acc = hist.history['val_acc']
train_loss = hist.history['loss']
val_loss = hist.history['val_loss']
epochs = range(1, len(train_acc) + 1)

plt.plot(epochs, train_acc, 'bo', label='Training acc')
plt.plot(epochs, val_acc, 'r', label='Val acc')
plt.title('Training and Val accuracy')
plt.legend()
plt.figure()
plt.plot(epochs, train_loss, 'bo', label='Training loss')
plt.plot(epochs, val_loss, 'r', label='Val loss')
plt.title('Training and Val loss')
plt.legend()
plt.show()
```
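The Xception run uses `EarlyStopping(monitor='val_acc', patience=3)`, while the first CNN used `patience=10`. In simplified form, training stops once the monitored value has failed to improve for `patience` consecutive epochs. The pure-Python sketch below simulates that rule on a made-up `val_acc` history (hypothetical numbers, not results from this post); the real Keras callback also has options such as `min_delta` and `mode` that it ignores.

```python
def stop_epoch(history, patience):
    """Return the 1-based epoch after which training would stop, or None.
    Simplified early-stopping rule for a metric where higher is better."""
    best = float('-inf')
    wait = 0
    for epoch, value in enumerate(history, start=1):
        if value > best:
            best, wait = value, 0   # improvement: remember it and reset the counter
        else:
            wait += 1               # no improvement this epoch
            if wait >= patience:
                return epoch
    return None

# Hypothetical val_acc values for illustration only
val_acc = [0.80, 0.86, 0.90, 0.89, 0.90, 0.88, 0.91, 0.90]
print(stop_epoch(val_acc, patience=3))   # 6
print(stop_epoch(val_acc, patience=10))  # None
```

A smaller patience ends runs sooner, which matters more for the slower Xception model, at the cost of possibly stopping just before a late improvement (here, the 0.91 at epoch 7).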

Wow!!

The accuracy reaches 95%.

It is much more stable and accurate than the previous model.

We'll use this model.
